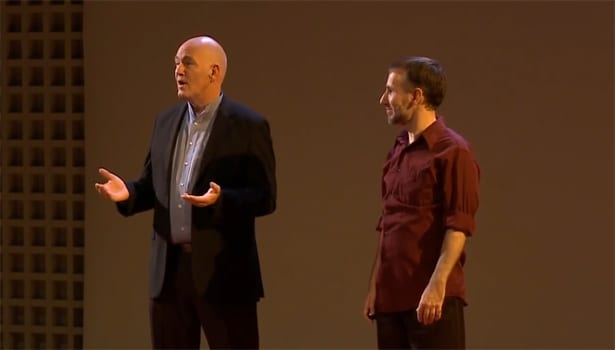

Bob Iger (Disney) Comments on Disney’s New Streaming Services and the Unbundling of Cable TV Content (short video)

In this short video, Bob Iger, Chairman and CEO of The Walt Disney Company, discusses Disney’s new streaming services, the future of digital media, and the unbundling of cable TV content.

Bob Iger has something to say about how Disney is preparing for this seemingly inevitable future. Disney owns some of the most valuable entertainment content assets out there (arguably most of them), and from that perspective, Bob Iger’s comments are invaluable. According to Bob Iger, Disney, which also includes ABC, ESPN, Pixar, and the new Star Wars and Marvel franchises, is well positioned for the general move toward cable unbundling. However, the current business models of the major pay-TV players, including Disney, are built around bundling, and they are certainly being disrupted by OTT (over-the-top) digital delivery. As we all know, OTT has been touted as the Holy Grail for the future of TV and video.

Which would you prefer: paying only for the content you consume, or overpaying for content bundles that include programming you don’t care about? It sounds like a no-brainer, so why not cancel your cable subscription in favor of Netflix, Amazon (Prime), or Hulu? Perhaps ~$10 a month vs. ~$70 a month? According to TechCrunch, “In a simplistic generalization, unbundling would remove the subsidization of pay TV, banishing the requirement for every pay TV subscriber to bear the cost of content that only a portion of the subscriber base actually wants and consumes, e.g., sports content, typically the most expensive channels.”

Watch this short video to hear what Bob Iger has to say about unbundling content, the current and future state of the entertainment industry, and Disney’s position on all of the above.

“Bob Iger (Disney) Comments on the Unbundling of Cable TV Content” is also available on 4thWEB’s Facebook Channel.